

Something fundamental has shifted in marketing, and most teams are only half-aware of it.

LLMs have become the most powerful production tool in the modern marketing stack. They're writing blogs, generating ad variants, and automating entire campaign workflows.

But they've also become a discovery channel. Buyers are opening ChatGPT, Perplexity, and Gemini and asking, "What's the best CRM for early-stage startups?" — and never touching Google.

This guide covers both halves: how to embed LLMs across your marketing workflow, and how to make sure LLMs recommend your product when buyers come asking.

The buyer journey doesn't start with a Google search the way it used to. Increasingly, it starts with a prompt.

In fact, 58% of consumers now turn to generative AI for product recommendations. In B2B SaaS specifically, this shift is compressing the top of the funnel in ways that traditional search analytics won't capture. Your buyer might ask ChatGPT, "What's the best project management tool for remote teams?" and never open a browser tab. If LLMs don't know your product well enough to recommend it, you're invisible to that buyer. Full stop.

This isn't a future trend. It's happening now, and the gap between brands building for it and brands ignoring it is widening every quarter.

You've heard of share of voice. Meet its successor: Share of Model (SOM) — how often, how prominently, and how favorably your brand appears in AI-generated responses.

Unlike Google, there's no page two in an LLM answer. If you're not mentioned, you don't exist. This reframes the entire strategic question. LLM marketing isn't just about using AI to produce content faster — it's about ensuring the content and authority you build actually registers with the models your buyers are using to make purchasing decisions. SOM is the metric that tells you how well you're doing at the second half of that equation.
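Share of Model has no standard formula yet. As a minimal sketch, assuming you have already logged each AI answer as a record of whether your brand was mentioned, where it appeared in the list of recommendations, and a hand-labeled sentiment, the three dimensions above (how often, how prominently, how favorably) could be computed like this. The `Observation` schema and the 1/position prominence weighting are illustrative assumptions, not an industry standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    """One AI-generated answer to one buyer prompt (hypothetical logging schema)."""
    mentioned: bool
    position: Optional[int] = None   # 1 = first brand recommended; None if absent
    sentiment: Optional[str] = None  # "positive", "neutral", or "negative"


def share_of_model(observations: list[Observation]) -> dict[str, float]:
    """Summarize frequency, prominence, and favorability across logged answers."""
    total = len(observations)
    hits = [o for o in observations if o.mentioned]
    if total == 0 or not hits:
        return {"mention_rate": 0.0, "prominence": 0.0, "favorability": 0.0}

    # How often: share of answers that mention the brand at all.
    mention_rate = len(hits) / total
    # How prominently: average of 1/position, so 1st place = 1.0, 2nd = 0.5, and so on.
    ranked = [o for o in hits if o.position]
    prominence = sum(1 / o.position for o in ranked) / len(ranked) if ranked else 0.0
    # How favorably: share of mentions labeled positive.
    favorability = sum(o.sentiment == "positive" for o in hits) / len(hits)
    return {"mention_rate": mention_rate, "prominence": prominence,
            "favorability": favorability}


log = [Observation(True, 1, "positive"), Observation(True, 4, "neutral"),
       Observation(False), Observation(False)]
print(share_of_model(log))
# {'mention_rate': 0.5, 'prominence': 0.625, 'favorability': 0.5}
```

Keeping the three numbers separate, rather than blending them into one score, makes it easier to see whether a change in Share of Model comes from appearing more often or from being described differently.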

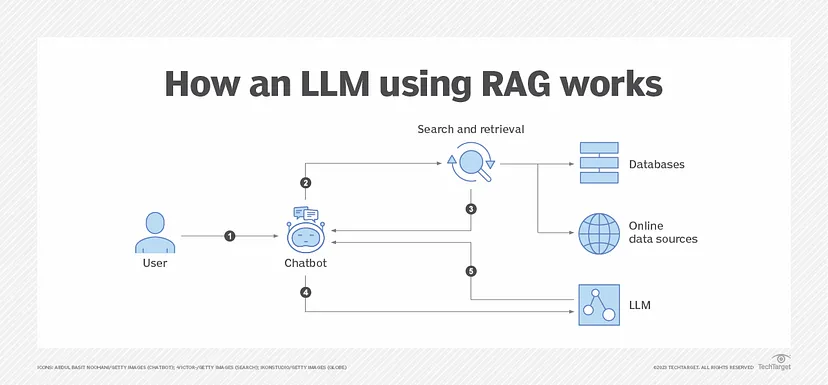

Before you can optimize for LLMs, you need to understand how they work — and more importantly, why certain brands get recommended while others don't.

LLMs like ChatGPT, Claude, and Gemini don't function like search engines. They don't rank links. They generate answers — synthesized, probabilistic responses that reflect patterns learned from enormous volumes of text. Three mechanisms drive what gets included:

Models learn from vast datasets — web content, documentation, forums, review platforms, and news coverage. This creates baseline awareness of brands, categories, and terminology. If your brand is well-represented in high-quality sources across the web, the model has more signal to draw on when a relevant question comes up.

Some AI assistants, particularly Perplexity and Gemini, supplement what the underlying model learned in training with real-time retrieval: they pull in live sources based on perceived relevance and authority. This is why appearing in trusted publications, review platforms, and well-ranked pages matters for LLM visibility, not just for Google rankings.

Source: https://hyperight.com/3-power-ups-rag-impact-on-large-language-models/

Models favor information that appears consistently across multiple sources. Repetition builds what you might call "model confidence" — the statistical weight that makes a brand more likely to be included in a synthesized response. One excellent page isn't enough. Consistent presence across many credible sources is what drives inclusion.

The key shift: you're not optimizing for rankings anymore. You're optimizing for model confidence. LLMs don't choose the best result — they synthesize the most statistically confident answer. A single strong page won't move the needle. A consistent body of evidence across authoritative sources will.

This is where LLMs deliver the most immediate, measurable value — and where most teams start. But there's a meaningful difference between using LLMs as a drafting shortcut and building them into a systematic content production layer.

At scale, the workflow looks like this:

Claude's Projects feature is worth calling out specifically. It lets you upload your brand voice guidelines, past articles, and style rules once as shared project knowledge, so every conversation in that project starts from the same context and produces more consistent output. For teams producing content at volume, this isn't a convenience feature; it's a production system.

The repurposing play is often underused: one well-researched long-form piece can be broken into a LinkedIn post series, an email nurture sequence, a Twitter/X thread, and a short-form video script — all within the same LLM session with the right prompting.
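As a minimal sketch of that repurposing step, the snippet below turns one long-form article into a set of channel-specific prompts you could run in the same session. The channel names and instructions are illustrative assumptions; no LLM API is called here, so the prompts can be pasted into whichever model or tool you use.

```python
# Hypothetical channel briefs; adjust to your own formats and voice rules.
CHANNEL_BRIEFS = {
    "linkedin_series": "Split the key ideas into 3 LinkedIn posts of under 200 words each.",
    "email_nurture": "Write a 4-email nurture sequence, one idea per email, each with a subject line.",
    "x_thread": "Write an X thread of 6 to 8 posts, each under 280 characters.",
    "video_script": "Write a 60-second short-form video script with a hook in the first line.",
}


def repurposing_prompts(article: str, briefs: dict[str, str] = CHANNEL_BRIEFS) -> dict[str, str]:
    """Build one prompt per channel, all grounded in the same source article."""
    return {
        channel: (
            "You are repurposing the article below. Keep every factual claim "
            "consistent with the source and do not add new statistics.\n\n"
            f"Task: {brief}\n\n"
            f"Article:\n{article}"
        )
        for channel, brief in briefs.items()
    }


prompts = repurposing_prompts("Sample article text.")
print(sorted(prompts))  # ['email_nurture', 'linkedin_series', 'video_script', 'x_thread']
```

Anchoring every prompt to the same source text is the point of the design: each derivative piece repeats the same claims, which keeps your messaging consistent across channels.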

LLMs accelerate every stage of the SEO content workflow, but they work best when paired with purpose-built optimization tools rather than used in isolation.

A typical high-performing workflow looks like this: use an LLM to research search intent, identify subtopics, and generate an outline; layer in an SEO platform for real-time optimization scoring against top-ranking pages; then use a keyword and competitor intelligence tool for gap analysis and LLM visibility tracking.

There are many tools that can fill each of these roles, and the right combination depends on your stack and workflow. A few examples:

The common thread across all of these: LLMs handle the generative and reasoning layer, while the SEO tool grounds the output in real search data.
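The gap-analysis step in that workflow reduces to set arithmetic once you have keyword lists. A minimal sketch, assuming you have exported the keywords each domain ranks for from whatever SEO tool you use (the keywords and competitor names below are made-up examples):

```python
def keyword_gaps(ours: set[str], competitors: dict[str, set[str]]) -> dict[str, list[str]]:
    """Keywords at least one competitor covers that we don't, with who covers them."""
    gaps: dict[str, list[str]] = {}
    for name, keywords in competitors.items():
        for kw in keywords - ours:  # set difference = competitor-only keywords
            gaps.setdefault(kw, []).append(name)
    # Keywords covered by the most competitors first; ties broken alphabetically.
    return dict(sorted(gaps.items(), key=lambda item: (-len(item[1]), item[0])))


ours = {"crm for startups", "sales pipeline template"}
competitors = {
    "competitor_a": {"crm for startups", "crm vs spreadsheet", "best crm for founders"},
    "competitor_b": {"best crm for founders", "crm pricing comparison"},
}
for kw, who in keyword_gaps(ours, competitors).items():
    print(kw, sorted(who))
```

Sorting by how many competitors share a gap is one reasonable prioritization: a topic every rival covers is more likely to be a standard part of the category conversation that LLMs also draw on.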

Here's what's changed: the best-performing content now needs to satisfy two audiences simultaneously. Google's ranking signals favor structured, specific, well-linked content. LLMs favor exactly the same things — structured, specific, factual content that clearly answers questions. Optimizing for one increasingly means optimizing for the other, which is a genuine opportunity if you approach it deliberately.

LLMs make one-to-many feel like one-to-one — when the workflow is built correctly.

The underperforming version: a marketer opens ChatGPT, asks for five email variants, copies the best one, and moves on. The high-leverage version: LLMs are embedded in the campaign production workflow, generating persona-specific email sequences, ad copy variants for continuous A/B testing, and landing page copy tailored to different audience segments — automatically, as part of a repeatable system.

The difference between one-off LLM prompts and systematic, workflow-embedded personalization is the difference between a productivity improvement and a structural competitive advantage.
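What separates the systematic version is that prompts are generated from structured inputs rather than typed by hand each time. A minimal sketch, assuming hypothetical persona and asset definitions, builds one brief for every persona-by-asset combination so each segment gets its own copy:

```python
from itertools import product

# Hypothetical segments and assets; in practice these would come from your CRM or campaign plan.
PERSONAS = {
    "founder": "early-stage founder who cares about speed and cost",
    "revops_lead": "RevOps lead who cares about data hygiene and integrations",
}
ASSETS = {
    "email_sequence": "a 3-email sequence",
    "ad_variants": "5 ad headline variants for A/B testing",
    "landing_hero": "a landing page hero section",
}


def campaign_briefs(product_summary: str) -> list[dict[str, str]]:
    """One generation brief per (persona, asset) pair."""
    briefs = []
    for (persona_key, persona), (asset_key, asset) in product(PERSONAS.items(), ASSETS.items()):
        briefs.append({
            "persona": persona_key,
            "asset": asset_key,
            "prompt": f"Write {asset} for this reader: {persona}. Product: {product_summary}",
        })
    return briefs


briefs = campaign_briefs("Workflow automation for RevOps teams.")
print(len(briefs))  # 6 = 2 personas x 3 assets
```

Adding a persona or an asset type then expands the whole matrix automatically, which is what turns one-off prompting into a repeatable system.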

LLMs are research accelerators — not a replacement for primary research, but a legitimate force multiplier for teams that know how to use them.

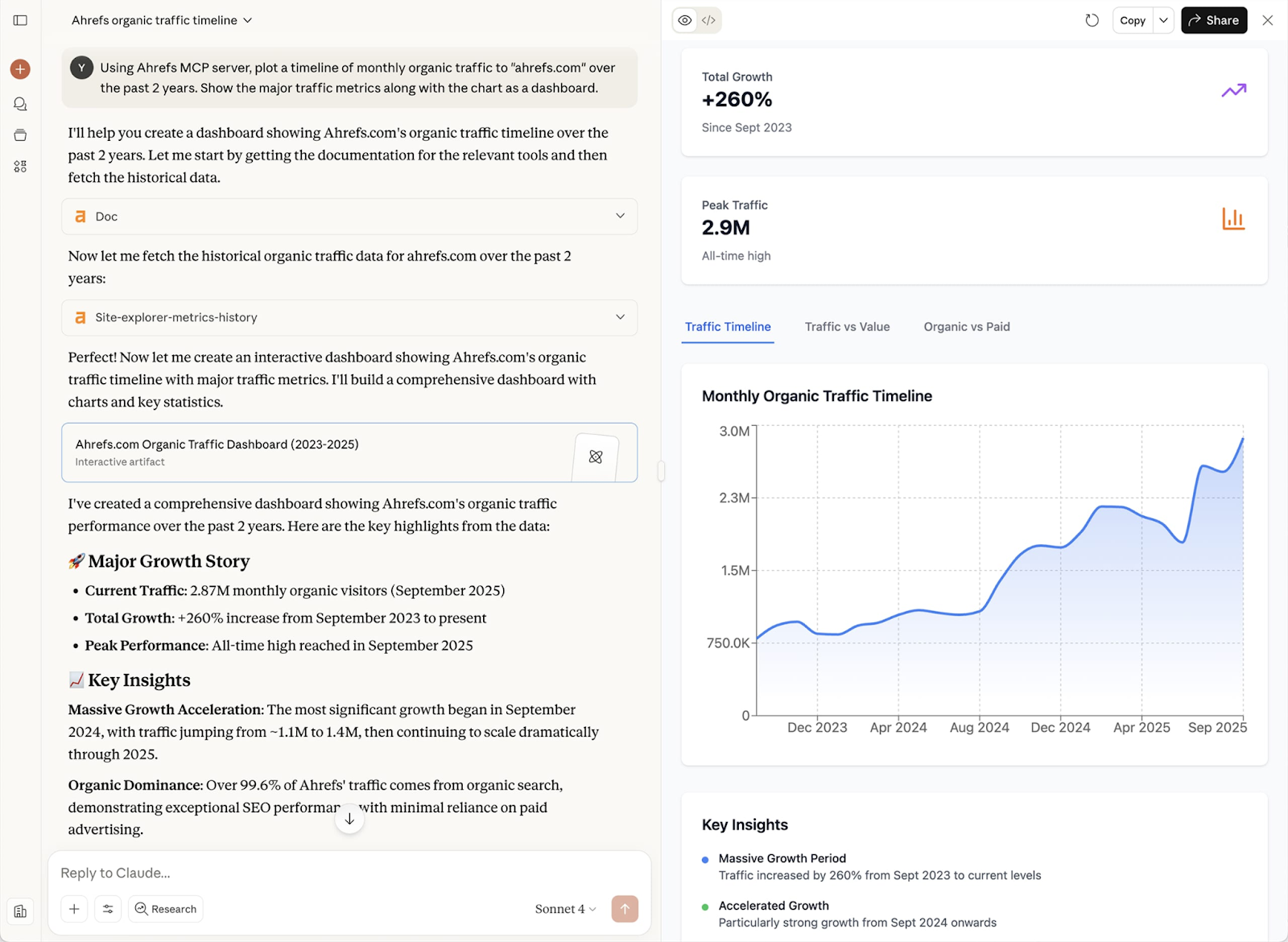

Claude's 200K context window means you can feed it an entire competitor's documentation, a competitor's pricing page, and a set of G2 reviews and ask it to synthesize a structured competitive analysis. Gemini's real-time data access makes it the right tool for trend monitoring — tracking what's being written about your category, your competitors, and emerging buyer concerns. GPT-4o is strong on generating structured competitive frameworks you can actually use as working documents.

Prompt engineering matters here more than anywhere else. The quality of your research output depends heavily on how specific and structured your input is. Vague prompts produce vague synthesis. Structured prompts, with clear context, a defined output format, and specific questions, produce analysis you can actually act on.
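To make "structured" concrete, here is a minimal sketch of a reusable research prompt template built from three parts: context, specific questions, and a defined output format. The section names and wording are assumptions you would adapt; the function only assembles text and does not call any model.

```python
def research_prompt(context: str, questions: list[str], output_format: str) -> str:
    """Assemble a structured competitive-research prompt from its three parts."""
    if not questions:
        raise ValueError("A structured prompt needs at least one specific question.")
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(questions, start=1))
    return (
        f"## Context\n{context}\n\n"
        f"## Questions\n{numbered}\n\n"
        f"## Output format\n{output_format}\n"
        "If the provided material does not answer a question, say so instead of guessing."
    )


prompt = research_prompt(
    context="You are analyzing the attached competitor pricing page and 30 G2 reviews.",
    questions=[
        "Which three complaints appear most often in the reviews?",
        "How does their entry-level plan compare with ours on seats and price?",
    ],
    output_format="A markdown table with columns: Finding, Evidence (quote), Implication for us.",
)
print(prompt)
```

Because the template is a function, the same structure can be reused and refined across research tasks instead of being rewritten from memory each time.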

Using LLMs as a chat interface is a 10x improvement. Embedding LLMs into automated workflows is a 100x improvement. The difference: one requires a human to initiate every task; the other runs continuously, handling weekly competitive reports, social scheduling, lead nurturing sequences, and content repurposing without manual intervention.

Tools like Gumloop and Zapier let you connect LLMs to your existing marketing stack without engineering support. A recurring content workflow that automatically pulls trending topics, generates drafts, applies brand guidelines, and queues posts for review is achievable for most marketing teams today, yet few are actually running one.
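Tools like Gumloop and Zapier configure this kind of workflow visually, but the underlying logic is a simple pipeline. The sketch below is a hypothetical, code-level version of the recurring content workflow described above: `fetch_trending_topics` and `generate_draft` are stand-in stubs (assumptions) that you would replace with your own data source and LLM call, and the brand-guideline check is a deliberately simple banned-phrase filter. Nothing publishes automatically; every draft lands in a review queue.

```python
from dataclasses import dataclass, field

# Hypothetical brand rule: phrases your guidelines forbid.
BANNED_PHRASES = ["all-in-one platform", "revolutionary", "game-changer"]


@dataclass
class Draft:
    topic: str
    text: str
    issues: list[str] = field(default_factory=list)


def fetch_trending_topics() -> list[str]:
    """Stub: replace with an RSS feed, a social listening export, etc."""
    return ["AI search visibility", "G2 review strategy"]


def generate_draft(topic: str) -> str:
    """Stub: replace with a call to your LLM of choice."""
    return f"A practical guide to {topic}. Our revolutionary approach explains why."


def apply_brand_guidelines(draft: Draft) -> Draft:
    """Flag (not silently rewrite) any banned phrase so a human can decide."""
    lowered = draft.text.lower()
    draft.issues = [p for p in BANNED_PHRASES if p in lowered]
    return draft


def run_weekly_pipeline() -> list[Draft]:
    """Topics -> drafts -> guideline check -> review queue."""
    review_queue = []
    for topic in fetch_trending_topics():
        draft = apply_brand_guidelines(Draft(topic, generate_draft(topic)))
        review_queue.append(draft)
    return review_queue


for d in run_weekly_pipeline():
    print(d.topic, "->", d.issues or "ready for review")
```

Flagging issues instead of auto-correcting them keeps a human in the loop, which matters when the output goes out under your brand.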

Google rankings and LLM mentions are correlated — but they're not the same thing, and the gap matters more every quarter.

A brand can rank on page one of Google for its target keywords and still be completely absent from ChatGPT responses. LLMs draw on training data, curated retrieval sources, and learned patterns in ways that don't map directly to search rankings. A high-ranking page that lacks specificity, third-party corroboration, or functional product descriptions will often be passed over by LLMs in favor of lower-ranking content that carries more model confidence.

Optimizing for LLM visibility requires a different playbook — one that runs in parallel to, not instead of, traditional SEO.

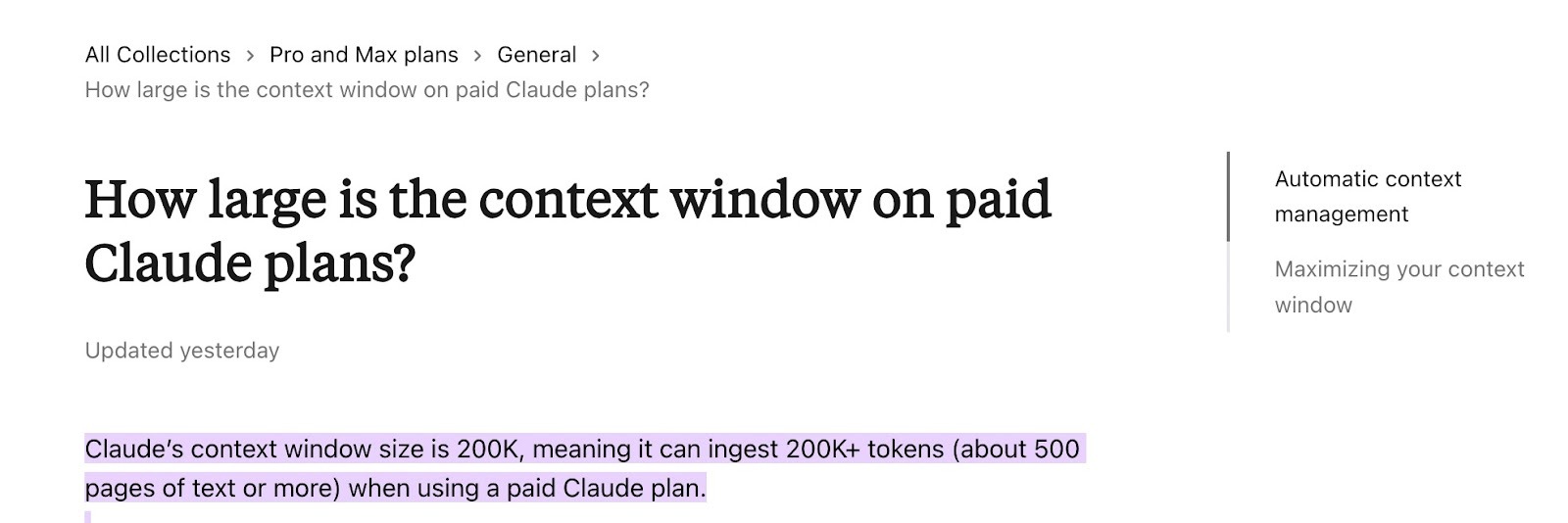

Here’s an example of how an LLM (Claude) responds to a simple user-generated question, “I'd like to buy new running shoes (female). Give me some good options.”

Claude recommended two top picks plus five additional options, grouped by “Best for” criteria. Its answer drew on niche running websites and on customer and expert reviews.

Running an experiment like this shows you which sources your brand needs to appear in for an LLM to mention it. Now, let’s look at this in more detail.

If you want to understand why some brands get recommended and others don't, the answer isn't about domain authority. It's about evidence density.

LLMs favor brands that appear in: authoritative third-party sources (press coverage, analyst mentions, G2 and Capterra reviews), structured and specific product content (feature pages, use-case pages, comparison pages), and content that directly answers the questions buyers are actually asking in LLM interfaces.

Vague, aspirational marketing copy doesn't register. "The all-in-one platform for modern teams" is invisible to a model trying to answer "What's the best project management tool for engineering teams?" Precise, functional descriptions of what your product does, who it's for, and how it compares to alternatives — those register.

Specific tactics that build LLM visibility:

The goal across all of these is the same: build a body of third-party evidence that LLMs can draw on with confidence when a relevant question appears.

You can't manage what you can't measure — and LLM visibility is still a nascent measurement discipline. But the tooling is catching up.

Scrunch is currently the most capable purpose-built option for tracking brand visibility across ChatGPT, Gemini, Perplexity, and other AI platforms. Unlike SEO incumbents that bolt AI monitoring onto keyword-centric architecture, Scrunch was built AI-first — so you can track custom prompts, filter by persona, funnel stage, competitor, and region, and see not just whether you appear but how you're positioned across models.

Manual prompt testing remains essential: run your category's most common buyer questions across multiple LLMs on a regular cadence and document where you appear. This is low-tech, but it's the ground truth.
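Even a low-tech process benefits from a consistent log. A minimal sketch, assuming you paste each model's answer into a list by hand (or collect it however you like) and that a whole-word, case-insensitive name match is good enough to detect a mention. The brand names and answers below are invented placeholders, not real model output.

```python
import re
from collections import defaultdict

# Hypothetical log: (model, buyer prompt, pasted answer text).
runs = [
    ("chatgpt", "best CRM for early-stage startups", "Top picks: RivalOne, ExampleCRM, RivalTwo."),
    ("perplexity", "best CRM for early-stage startups", "Consider RivalOne or RivalTwo."),
    ("gemini", "best CRM for early-stage startups", "ExampleCRM is a strong option for founders."),
]


def mention_report(runs: list[tuple[str, str, str]], brand: str) -> dict[str, float]:
    """Per-model share of answers that mention the brand (whole-word, case-insensitive)."""
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    seen, hits = defaultdict(int), defaultdict(int)
    for model, _prompt, answer in runs:
        seen[model] += 1
        if pattern.search(answer):
            hits[model] += 1
    return {model: hits[model] / seen[model] for model in seen}


print(mention_report(runs, "ExampleCRM"))
# {'chatgpt': 1.0, 'perplexity': 0.0, 'gemini': 1.0}
```

Rerunning the same prompt list each month and comparing these per-model rates gives you the trend line that a single spot check cannot.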

Sentiment monitoring is the underused layer — not just whether you're mentioned, but how. Are you positioned as a leader, a budget option, or a specialist? LLMs inherit the sentiment of the sources they draw from, which means how you're described in reviews and press coverage shapes how you're described in AI responses.
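Positioning labels are usually assigned by a human reviewer, but a crude first pass can be automated. The sketch below is a hypothetical keyword heuristic, not a real sentiment model: it looks only at the sentences that mention your brand and tags them as leader, budget option, or specialist based on cue words you define. The cue lists and sample answer are illustrative assumptions.

```python
import re

# Hypothetical cue words per positioning; tune these for your category.
CUES = {
    "leader": ["market leader", "industry standard", "most popular", "best overall"],
    "budget": ["affordable", "cheap", "budget", "free plan", "low-cost"],
    "specialist": ["niche", "specifically for", "built for", "specialized"],
}


def positioning(answer: str, brand: str) -> list[str]:
    """Return positioning tags found in the sentences that mention the brand."""
    sentences = re.split(r"(?<=[.!?])\s+", answer)
    about_brand = " ".join(s for s in sentences if brand.lower() in s.lower()).lower()
    return [label for label, words in CUES.items() if any(w in about_brand for w in words)]


answer = ("RivalOne is the best overall choice. "
          "ExampleCRM is an affordable option built for two-person sales teams.")
print(positioning(answer, "ExampleCRM"))  # ['budget', 'specialist']
```

Restricting the check to sentences about your brand avoids crediting you with praise aimed at a competitor in the same answer.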

The brands building monitoring habits now will have a structural advantage over those who start later. The data compounds over time.

If you'd rather have experts handle the strategy and execution end-to-end, Seen is an AI SEO agency built specifically for SaaS teams. They run a unified visibility strategy across Google and LLMs — from content and on-page optimization to AI search tracking and authority-building — and embed directly with your team to make it happen.

Understanding the what of LLM visibility is useful. Understanding the why — the decision logic behind recommendations — is what turns tactics into strategy.

The core reframe: LLMs decide what to include, not what to rank. The factors that drive inclusion:

If your brand is mentioned favorably across G2, a TechCrunch feature, three industry comparison posts, and a Reddit thread in your category, the model has a high consensus signal to draw on. This is the principle behind what's increasingly being called "consensus engineering" — the deliberate, systematic effort to ensure your brand's positioning appears consistently across many sources.
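One rough way to gauge your consensus signal is to count how many distinct domains mention you, rather than how many pages. A minimal sketch, assuming you already have a list of URLs that mention your brand (from a mention-monitoring export, for example); the URLs below are placeholders on reserved example domains.

```python
from urllib.parse import urlparse


def distinct_sources(urls: list[str]) -> dict[str, int]:
    """Count mentions per domain; breadth across domains matters more than volume on one."""
    counts: dict[str, int] = {}
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.example.org and example.org as one source
        counts[host] = counts.get(host, 0) + 1
    return counts


mentions = [
    "https://reviews.example.com/examplecrm",
    "https://www.news.example.org/best-crm-tools",
    "https://news.example.org/examplecrm-review",
    "https://forum.example.net/t/which-crm/123",
]
by_domain = distinct_sources(mentions)
print(len(by_domain), "distinct domains")  # 3 distinct domains
```

Four mentions here collapse to three sources, which is the number that better reflects how widely your positioning is corroborated.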

Brands with clear use cases and specific feature descriptions appear more often than brands with broad, vague positioning. If your product page says "AI-powered workflow automation for RevOps teams," it's more likely to appear in a response to "What's the best workflow tool for RevOps?" than a page that says "The future of work."

LLMs don't rank for keywords — they respond to prompts. The most common evaluative prompts follow recognizable patterns: "best tools for [use case]," "alternatives to [competitor]," "[Product A] vs [Product B]." Build content that directly mirrors these patterns, and you're optimizing for the actual queries your buyers are using.
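Those three patterns are regular enough to generate a prompt set programmatically, and the same list can double as the set of buyer questions you test across ChatGPT, Perplexity, and Gemini. A minimal sketch; the use case, brand, and competitor names are hypothetical placeholders.

```python
from itertools import combinations


def evaluative_prompts(use_cases: list[str], brand: str, competitors: list[str]) -> list[str]:
    """Expand the common evaluative prompt patterns into concrete buyer queries."""
    prompts = [f"best tools for {uc}" for uc in use_cases]
    prompts += [f"alternatives to {c}" for c in competitors]
    # Head-to-head comparisons: brand vs each competitor, plus competitor pairs.
    prompts += [f"{brand} vs {c}" for c in competitors]
    prompts += [f"{a} vs {b}" for a, b in combinations(competitors, 2)]
    return prompts


for p in evaluative_prompts(["RevOps workflow automation"], "ExampleCRM", ["RivalOne", "RivalTwo"]):
    print(p)
```

Competitor-versus-competitor prompts are included because buyers ask them too, and an answer to "RivalOne vs RivalTwo" is an opening for an LLM to mention a third option.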

Models need to know what category you're in and who you compete with. Unclear or overly differentiated positioning — "we're not really a CRM, we're a relationship intelligence platform" — can result in no recommendation at all, because the model can't confidently assign you to a relevant category.

The more sources carrying your brand's signal, the higher the likelihood of inclusion. This isn't about duplicate content — it's about strategic distribution across platforms that LLMs treat as credible.

Distribution, in the LLM era, isn't just about traffic. It's about signal building.

Review platforms (G2, Capterra, Trustpilot) carry outsized weight because they're structured, aggregated, and widely trusted. A complete, specific, positively reviewed G2 profile is one of the highest-leverage LLM visibility investments a SaaS company can make.

Editorial content (listicles, comparison posts, niche industry blogs) reinforces positioning in exactly the formats LLMs pull from most often. A "10 best tools for [your category]" post from a credible publication is more valuable than almost any piece of owned content for LLM visibility purposes.

Communities (Reddit, Hacker News, Discord) contribute real, unfiltered language about how people think about your product and category. Models learn from this. If your product is discussed accurately and favorably in community threads, that signal feeds into LLM responses.

Owned content, particularly comparison pages, alternatives pages, use-case pages, and FAQ content structured around actual buyer prompts, is what you control directly. Think of it as expanding your "comparison surface area": the more entry points you create into category comparisons and evaluative queries, the more opportunities you create for LLMs to include you.

The key takeaway: distribution builds model confidence, not just traffic. These are no longer separate concerns.

Here are the models worth knowing and what each is actually good for.

Claude is the strongest choice for long-form content, strategic documents, and any work where brand voice consistency and factual accuracy matter. The Projects feature maintains context across sessions, and the 200K context window means it can process full strategy documents without losing coherence. Lower hallucination rates make it a safer choice for regulated industries or any content where errors carry real costs.

GPT-4o remains the best all-around model for speed and versatility. It's the strongest choice for short-form copy, A/B variants, structured outputs, and integration with third-party tools. Many generative workflow tools are built on GPT-4o, making it the default for teams automating high-volume content production.

Gemini earns its place through real-time data access and deep Google Workspace integration. For research-heavy content and trend-driven pieces, it's unmatched. Creative tone is less polished than Claude or GPT-4o, but if your content workflow involves pulling live data, Gemini belongs in the stack.

Llama, Meta's open-weight model family, is the choice for enterprise teams with technical resources who need self-hosted, privacy-first infrastructure. Fine-tuning to your brand voice, product context, or compliance requirements is possible in ways hosted models can't match, but it requires engineering investment to implement.

DeepSeek has emerged as a serious contender for cost-conscious teams that don't want to sacrifice capability. Its reasoning model performs surprisingly well on structured analytical tasks (competitive research, content frameworks, and data-heavy briefs) at a fraction of the API price of comparable frontier models. For teams running high volumes of research-oriented prompts, DeepSeek offers a compelling price-to-performance ratio. Worth noting: because DeepSeek is a China-based company, teams handling sensitive data or operating in regulated industries should review their data privacy requirements before adopting it at scale.

Most teams start with one LLM and treat it as a universal tool. The teams getting the most leverage use a deliberate stack: Claude for strategy and long-form, GPT-4o for production and automation, Gemini for research, and DeepSeek for cost-efficient analytical work at volume. The goal isn't finding one model that does everything adequately. It's matching the model to the task — and building a system around that matching logic.
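That matching logic can be written down explicitly, which makes it reviewable and easy to change when pricing or model quality shifts. A minimal sketch of a task-to-model routing table that mirrors the stack above; the task names and model labels are illustrative placeholders, not provider model IDs, and the sensitive-data rule is an assumption based on the DeepSeek privacy caveat.

```python
from enum import Enum


class Task(Enum):
    LONG_FORM = "long_form"
    STRATEGY = "strategy"
    SHORT_COPY = "short_copy"
    AUTOMATION = "automation"
    LIVE_RESEARCH = "live_research"
    BULK_ANALYSIS = "bulk_analysis"


# Mirrors the stack described above; labels are placeholders, not API model IDs.
ROUTES = {
    Task.LONG_FORM: "claude",
    Task.STRATEGY: "claude",
    Task.SHORT_COPY: "gpt-4o",
    Task.AUTOMATION: "gpt-4o",
    Task.LIVE_RESEARCH: "gemini",
    Task.BULK_ANALYSIS: "deepseek",
}
DEFAULT_MODEL = "gpt-4o"  # assumption: the all-rounder handles anything unmapped


def pick_model(task: Task, sensitive_data: bool = False) -> str:
    """Route a task to a model, keeping sensitive work off DeepSeek's hosted API."""
    model = ROUTES.get(task, DEFAULT_MODEL)
    if sensitive_data and model == "deepseek":
        return "claude"  # assumption: reroute sensitive analytical work
    return model


print(pick_model(Task.BULK_ANALYSIS))                       # deepseek
print(pick_model(Task.BULK_ANALYSIS, sensitive_data=True))  # claude
```

Keeping the routing in one table means a pricing change or a new model release is a one-line edit rather than a change to every workflow.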

Think about it in three layers:

The goal is to get to Layer 3. One repeatable, automated workflow that produces real outputs beats ten tools used sporadically.

Start with the highest time-cost task in your current marketing operation — not the most exciting one.

If it's content production: Claude or GPT-4o with a well-built system prompt that carries your brand voice and editorial guidelines. If it's research and competitive analysis: Gemini or Claude with structured prompt templates you can reuse and refine. If it's distribution and repurposing: Gumloop or Zapier connecting your content tool to your social and email platforms.

One embedded, repeatable workflow beats ten tools used occasionally. Start narrow, get it working, then expand.

For teams just beginning to optimize for LLM recommendations, three actions take under a day and give you a baseline to build from:

This isn't a one-time exercise. Make it a monthly habit. The discipline compounds.

Not sure where to start, or looking to move faster? Seen works with SaaS teams at exactly this stage — turning a visibility baseline into a compounding growth system across both Google and LLMs.

The double shift is real, and it's accelerating. LLMs are simultaneously the most powerful production tool available to marketing teams and an increasingly important discovery channel for the buyers those teams are trying to reach.

The teams winning in 2026 are doing both — using LLMs to produce better content faster, and building the body of evidence that causes LLMs to recommend them when it matters. Neither strategy works without the other. Together, they compound.

If you want a partner who can help you build both sides of that equation, Seen works with SaaS teams to grow visibility across Google and LLMs and turn it into a pipeline. Book a strategy call to see where you stand.

LLM marketing refers to two interconnected practices: using large language models (like Claude, GPT-4o, and Gemini) to produce and automate marketing content and workflows, and optimizing your brand so that those same LLMs recommend you when buyers use AI interfaces to research products. The most effective LLM marketing strategies address both dimensions simultaneously.

Traditional SEO focuses on ranking web pages in search engine results so human users click through to them. LLM optimization (sometimes called GEO — Generative Engine Optimization, or AEO — Answer Engine Optimization) focuses on ensuring your brand appears in AI-generated responses in tools like ChatGPT and Perplexity. The two disciplines overlap — authoritative, structured content performs well in both — but they require different tactics. SEO prioritizes backlinks and keyword placement; LLM visibility prioritizes consistent third-party presence, specific product descriptions, and content that mirrors the prompts buyers actually use.

LLM stands for Large Language Model — a type of AI system trained on large volumes of text data to understand and generate human language. Examples include GPT-4o (OpenAI), Claude (Anthropic), and Gemini (Google). These are the foundation models powering most AI writing tools, chatbots, and AI search interfaces in use today.

MLM stands for Multi-Level Marketing — a sales and distribution model where participants earn income from their own sales and the sales of people they recruit. LLM stands for Large Language Model, a category of AI. These are entirely unrelated terms that happen to share similar letter patterns. In a marketing context, LLM always refers to the AI technology.

An LLM marketing strategy is a plan that covers how a marketing team will use large language models to improve the efficiency and quality of their work (content production, personalization, research, automation), and how they'll ensure their brand is visible and recommended in AI-generated responses that buyers use to make purchasing decisions. A complete LLM marketing strategy defines which models to use for which tasks, how those models are embedded into repeatable workflows, how LLM visibility will be tracked, and what actions will be taken to improve Share of Model over time.


With 10 years of writing experience and a deep interest in SEO, AI, and content management, Anna’s here to break down big ideas and translate them into plain English for readers of all levels. She's got a Master's Degree in International Information and is a lifelong learner of writing and storytelling. Outside of work, she’s on the yoga mat, in a good book, or planning her next adventure with family and friends.