In traditional SEO, competitive analysis was straightforward: open Ahrefs, see what your competitors ranked for, track where they were gaining ground. AI search offers none of that transparency by default.

When ChatGPT, Perplexity, or Gemini answers a buyer's question, it doesn't rank 10 blue links — it synthesizes a response and cites a handful of sources. There's no Search Console for that. No rankings feed. No opt-in. The citations are happening whether you're watching or not.

This article breaks down the best tools for analyzing competitors in AI search, what to look for before you buy, and how to turn competitive intelligence into actual strategy.

On paper, most monitoring tools look similar. In practice, Scrunch's core differentiator is what they call customizable dimensions — the ability to layer personas, funnel stages, custom tags, topics, regions, and competitors simultaneously to filter insights in ways other tools structurally can't.

That matters for competitive analysis because the useful question is rarely "how does Competitor X compare to us overall?" It's "where is Competitor X being cited for bottom-of-funnel prompts among enterprise buyers in North America, and why?" Scrunch is built to answer that without requiring a vendor request or custom build.

The platform tracks 7+ major AI platforms including ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews, Meta AI, and Google AI Mode. The GA4 integration connects citation data to real traffic behavior, which matters for proving competitive intelligence actually translates to business impact. Site Maps give a global view of how AI traffic is consuming your site and where it's getting stuck — useful context when you're trying to understand why a competitor's pages are being cited and yours aren't.

AXP (Agent Experience Platform) is Scrunch's most ambitious differentiator: rather than generating more AI-written content, AXP delivers a parallel, machine-readable version of your site specifically built for AI agents at the CDN layer — preserving the human experience while giving AI bots clean, structured, brand-safe content to ingest.

Starting price: $300/month

Ahrefs Brand Radar's competitive intelligence advantage is its data foundation: 300+ million search-backed prompts drawn from real user queries, not synthetic ones. That distinction matters — the prompts in Brand Radar reflect how buyers are actually behaving, which means the competitive benchmarks you're seeing are grounded in real search behavior rather than vendor-generated questions.

The platform covers six AI indexes: ChatGPT, Perplexity, Gemini, Copilot, Google AI Overviews, and AI Mode. Zero setup means you can benchmark any competitor immediately without building a tracking project first — useful for quick competitive research before a pitch or planning cycle. The addition of YouTube, Reddit, and TikTok mention tracking is genuinely useful for upstream competitive intelligence: demand is often shaped on those platforms before it becomes an AI citation, and seeing where a competitor is building audience gives you early signal.

For teams who want to track specific high-value prompts, Brand Radar now offers custom prompt tracking as an add-on at $50/month for 80 custom prompts — a relatively accessible way to monitor targeted competitive queries on top of the broader database. If you're already on Ahrefs, the free plan gives you limited access to core tools including Brand Radar, making it a low-friction starting point before committing to a paid index.

Starting price: $199/month per AI index; custom prompts add-on at $50/month for 80 prompts. A free plan with limited access is available.

Profound's competitive advantage for analysis is the Conversation Explorer: it surfaces real-time data on how often topics in your category are being discussed across AI platforms, which is valuable for understanding where a competitor is building share of voice before it shows up in citation data. Rather than just tracking what AI says about your competitor today, you can see which topic territories are trending — and who's currently owning them.

The citation vs. mention distinction also matters for serious competitive analysis. A citation means your competitor got a linked source reference — authority that AI models consistently reward. A mention is just a name drop. Most tools treat these as equivalent. Profound doesn't, which gives you more signal about where competitors are genuinely earning trust versus where they're being referenced in passing.

The "read/write" model closes the competitive loop: monitoring reveals where competitors are winning, and the content generation layer lets you act on that gap in the same platform. Profound also includes ChatGPT Shopping data, which is relevant for e-commerce brands tracking how competitor products appear in AI-generated shopping responses. The platform is SOC 2 Type II and HIPAA compliant, which matters for enterprise procurement cycles.

Starting price: $99/month (Starter — ChatGPT only); $399/month (Growth — 3 platforms)

For competitive analysis at the SMB level, Peec is the most accessible entry point with real depth. The Sources feature is particularly useful: it shows which specific URLs and domains AI systems cite when answering your tracked prompts — including a gap analysis identifying sources that consistently cite your competitors but not you. That gap list is essentially a prioritized outreach brief, not just a data point.

Peec tracks six AI platforms and provides daily updates, which matters because AI citation patterns can shift quickly — especially after a competitor publishes new content or earns coverage from a highly cited source. The Suggested Prompts feature auto-generates tracking prompts from your website, but for competitive work, the more useful approach is manually defining prompts where you already know competitors are winning.

Unlimited seats is a real advantage for agencies running competitive analysis across multiple client accounts. The 14-day trial means you can validate data quality before committing.

Starting price: €89/month

AthenaHQ was founded by former Google Search and DeepMind engineers and is Y Combinator-backed — pedigree that shows up in the product's technical depth. For competitive analysis, the standout is the Action Center: after you identify where a competitor is outperforming you, the platform generates specific recommendations for what to create or update, including content restructuring, FAQ additions, schema suggestions, and outreach targets. Most tools show you the gap. AthenaHQ tries to tell you how to close it.

The platform tracks 8 LLMs, offers competitor benchmarking with prompt-level granularity, and includes sentiment tracking that shows not just whether a competitor is being cited, but how AI models are characterizing them. A G2 reviewer described catching ChatGPT recommending a competitor for a use case where their own product was more suitable — that kind of competitive misinformation detection requires exactly this level of prompt-level monitoring. The agency pitch workspace lets you generate competitive AI search performance reports for prospects, which turns the tool from a cost center into a business development asset.

Starting price: $295/month (self-serve); $595/month (Growth); Enterprise custom

The strongest case for Semrush's AI Visibility Toolkit is the integration argument: if your team already lives inside Semrush for traditional SEO, adding AI competitive intelligence without switching platforms removes real friction. You can compare where you rank on Google and where a competitor is being cited in ChatGPT in the same dashboard, with reporting formats your team already knows.

The Competitor Research report lets you benchmark up to five rivals at once with side-by-side metrics for mentions, citations, and topic coverage. The "Unique" tab is particularly useful competitively: it isolates prompts where only your brand appears, showing you the territory you can defend, and conversely the prompts where you're absent and competitors aren't. The AI Traffic dashboard estimates how much referral traffic competitors are capturing from AI platforms, which helps prioritize which competitive gaps actually have business impact beyond citation counts.

Semrush One at $199/month is the better entry point if you're buying fresh — it includes both the AI Visibility Toolkit and the full SEO suite. Best for teams who already rely on Semrush and want competitive AI data without adding another platform.

Starting price: $99/month (standalone AI Visibility Toolkit per domain); $199/month (Semrush One); 7-day free trial available

Otterly is the most accessible entry point in this roundup at $29/month for the Lite plan, and it earns its place because it solves the "I need to prove AI visibility ROI before committing to an enterprise tool" problem better than most alternatives. For a startup or small agency where leadership needs to see the data before approving a larger budget, the price point removes the approval friction.

The competitive intelligence functionality covers the essentials: share of voice tracking across six AI platforms (ChatGPT, Perplexity, Google AI Overviews, AI Mode, Gemini, and Copilot), citation tracking that distinguishes between linked citations and unlinked mentions, and a GEO audit tool that analyzes 25+ on-page factors affecting AI citations. Unlimited team members and workspaces across all plans make it genuinely agency-friendly — you can run separate dashboards for each client with isolated reporting without per-workspace fees. The Looker Studio integration with pre-built templates is a practical advantage for agencies that need to deliver client-ready competitive reports without custom dashboard work.

Starting price: $29/month (Lite, 15 prompts); $189/month (Standard, 100 prompts); $489/month (Premium, 400 prompts); 14-day free trial available

Did you know that 73% of B2B buyers now use AI tools like ChatGPT and Perplexity in their research process, and that 61% of the buying journey is complete before a buyer contacts a vendor?

In traditional SEO, competitive analysis meant checking keyword rankings, backlink gaps, and domain authority. All of it was measurable, comparable, and trackable in near real-time. AI search broke that model entirely.

When ChatGPT, Perplexity, or Gemini answers a buyer's question about your product category, it doesn't rank 10 blue links. It synthesizes a response and cites five to seven sources. Those citation slots are finite. The brands occupying them aren't chosen by bid — they're chosen by authority signals that most companies aren't even measuring yet.

The mechanical reason traditional tools are blind is worth spelling out. SEO tools track signals you define and opt into — keywords, backlinks, ad spend. AI search has no opt-in. The citations are happening whether you're watching or not, and the sources winning them are largely invisible to conventional SEO tooling. Ahrefs can't tell you that your competitor is being cited in 80% of ChatGPT responses to comparison prompts in your category. Neither can Semrush's traditional suite. Not because those are bad tools — they're excellent at what they were built for — but because they were built for a ranked-list world that AI search is rapidly bypassing.

Here's the competitive asymmetry risk: if your competitor is monitoring their AI search presence and you're not, they're building citation authority while you're flying blind. They know which prompts they're winning. They know where you're absent. They're filling those gaps with content. This is exactly the problem AI competitive analysis tools were built to solve.

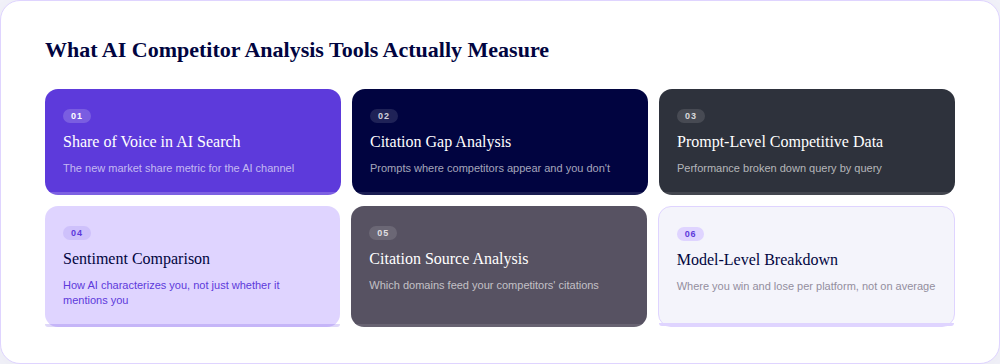

Most readers entering this category don't yet know what "good" looks like. Here are the six metrics that matter, and why each one changes what you do.

Share of voice is the percentage of relevant AI-generated responses in which your brand appears, compared to competitors. It matters competitively because it's the new market share metric for a channel where your buyers are increasingly making decisions without ever visiting your site. A competitor gaining share of voice in AI search right now is building a position that compounds: the more they're cited, the more authoritative they become to the models.
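To make the definition concrete, here is a minimal Python sketch of a share of voice calculation. It assumes you already have the raw text of AI responses for your tracked prompts. The brand names, the sample responses, and the `share_of_voice` helper are all hypothetical, and commercial tools almost certainly use more careful matching than a simple substring check.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Return, for each brand, the fraction of responses that mention it.

    Matching is a case-insensitive substring check, which is an
    assumption made for illustration only.
    """
    total = len(responses)
    if total == 0:
        return {brand: 0.0 for brand in brands}

    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1  # count each response at most once per brand

    return {brand: counts[brand] / total for brand in brands}


# Hypothetical responses collected for one set of tracked prompts.
responses = [
    "For enterprise teams, Acme and Globex are common picks.",
    "Globex is often recommended for smaller teams.",
    "Acme, Globex, and Initech all offer this feature.",
    "Initech is a niche alternative.",
]

sov = share_of_voice(responses, ["Acme", "Globex", "Initech"])
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

Because several brands can appear in the same response, the shares do not need to sum to 100%.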

Citation gap analysis shows you which prompts your competitors are being cited for that you're not. It's the most immediately actionable output any tool can give you, because it hands you a prioritized content brief: these are the exact queries where you're invisible and your competitors aren't. Without it, you're guessing at content priorities. With it, you're filling specific gaps with documented competitive urgency.
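At its core, a citation gap is a set comparison. The sketch below assumes you have, for each tracked prompt, the set of brands cited in the AI response. The prompts, the brand names, and the `citation_gap` function are hypothetical and only illustrate the logic, not how any particular tool implements it.

```python
def citation_gap(cited_by_prompt, us, competitor):
    """Return the prompts where the competitor is cited and we are not."""
    return sorted(
        prompt
        for prompt, brands in cited_by_prompt.items()
        if competitor in brands and us not in brands
    )


# Hypothetical tracking data: prompt -> brands cited in the response.
cited_by_prompt = {
    "best crm for enterprise finance teams": {"Globex", "Initech"},
    "acme vs globex": {"Acme", "Globex"},
    "crm with sso support": {"Globex"},
    "what is a crm": {"Acme"},
}

gap = citation_gap(cited_by_prompt, us="Acme", competitor="Globex")
for prompt in gap:
    print(prompt)
```

The resulting list is the raw material for the content brief described above. Ranking it by buyer intent is a separate step.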

Rather than reporting aggregate visibility scores, prompt-level tracking breaks down performance query by query. It matters because winning at the category level can hide the fact that you're losing in the specific prompts your highest-value buyers are using. A tool that shows you "Competitor X has 60% AI share of voice" is less useful than one that shows you "Competitor X is dominating comparison prompts for your core use case among enterprise buyers."

AI models don't just cite brands — they characterize them. Are you being described as "a solid enterprise option" or "better suited for smaller teams"? Are you framed as a market leader or a niche alternative? Knowing how AI positions you relative to competitors changes how you brief content, PR strategy, and messaging. A positive mention count that masks negative framing is worse than no mention at all.

Source analysis tells you which external domains are feeding citations to your competitors in AI responses. It matters because those are the exact third-party publications, review sites, and forums you need to prioritize for outreach and earned coverage. Most tools that surface this data essentially hand you a PR roadmap: the sources AI trusts in your category, ranked by how often they feed your competitor's citations.
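One way to picture that PR roadmap is as a ranked list of domains that cite your competitor but never cite you. The sketch below assumes a simple export of `(source_url, brand)` pairs, one per citation. That data shape, the example URLs, and the `competitor_only_sources` helper are assumptions made for illustration.

```python
from collections import Counter
from urllib.parse import urlparse


def competitor_only_sources(rows, us, competitor):
    """Rank domains that cite the competitor and never cite us.

    rows: iterable of (source_url, brand) pairs, one per citation.
    Returns a list of (domain, competitor_citation_count), most cited first.
    """
    by_domain = {}
    for url, brand in rows:
        domain = urlparse(url).netloc.lower()
        by_domain.setdefault(domain, Counter())[brand] += 1

    ranked = [
        (domain, counts[competitor])
        for domain, counts in by_domain.items()
        if counts[competitor] > 0 and counts[us] == 0
    ]
    # Sort by citation count (descending), then by domain for stable output.
    return sorted(ranked, key=lambda item: (-item[1], item[0]))


# Hypothetical citation export.
rows = [
    ("https://reviews.example.com/best-crm", "Globex"),
    ("https://reviews.example.com/crm-roundup", "Globex"),
    ("https://forum.example.org/t/123", "Globex"),
    ("https://forum.example.org/t/456", "Acme"),
    ("https://news.example.net/crm", "Globex"),
]

targets = competitor_only_sources(rows, us="Acme", competitor="Globex")
for domain, count in targets:
    print(domain, count)
```

Domains that already cite both brands drop out of the list, which leaves only the sources where outreach could change the picture.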

Different AI platforms cite different sources. Your competitor might dominate in ChatGPT but barely appear in Perplexity. This isn't a marginal difference — citation volumes for the same brand can differ by 615x between platforms, and only 11% of domains are cited by both ChatGPT and Perplexity. Without model-level data, you're optimizing for an average that masks where the real battles are being won and lost. As AI search matures and users develop platform preferences, model-level competitive gaps will become increasingly meaningful to track separately.

The tools below measure some or all of these things. The ones that measure all of them — and let you act on what they find — are worth the most.

Not a features list. The specific questions a skeptical marketer should ask before signing anything.

Some tools auto-suggest prompts based on your website content. That's a useful starting point, but if you can't define your own queries, you're tracking the vendor's best guess at what your buyers are asking. For competitive analysis specifically, you need to track the prompts where your competitors are winning — not the ones that feel safe. Scrunch, for example, lets you create and monitor fully custom prompts in a self-serve way, without having to wait for vendor configuration.

The minimum bar in 2026 is five: ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews. Your competitor might be strong on one platform and weak on another. Single-model tools miss the whole picture.

Aggregated visibility scores are a starting point, not a strategy. The useful data is: where are we losing to Competitor X among enterprise buyers at the comparison stage in Germany? Tools that can't slice that way leave you with averages that obscure the signal. Scrunch's customizable dimensions — personas, funnel stages, regions, custom tags, competitors — are built specifically to answer this kind of question.

Knowing your competitor is winning at 20 prompts you're absent from is useful. Knowing which pages to create or update, and having a way to act from within the platform, is better. Ask any vendor: "After I see a competitive gap, what does the tool help me do about it?" Most tools stop at the dashboard. The better ones don't.

Most of the tools worth using in 2026 offer at least a limited trial. If a vendor won't let you test the product before committing to hundreds of dollars per month, that's a question worth pressing on. As Scrunch puts it internally: parity on paper is not parity in practice. The best way to evaluate the difference is to use both products.

If your procurement team is involved, ask early about SOC 2 compliance, SSO, RBAC, API access, and multi-brand management. Several tools in this category have these; several don't. Finding out after you've built the business case is not a good use of time.

The tools that pass all six tests are the ones worth your serious attention. The ones that pass three or four are still useful — just go in knowing the tradeoffs.

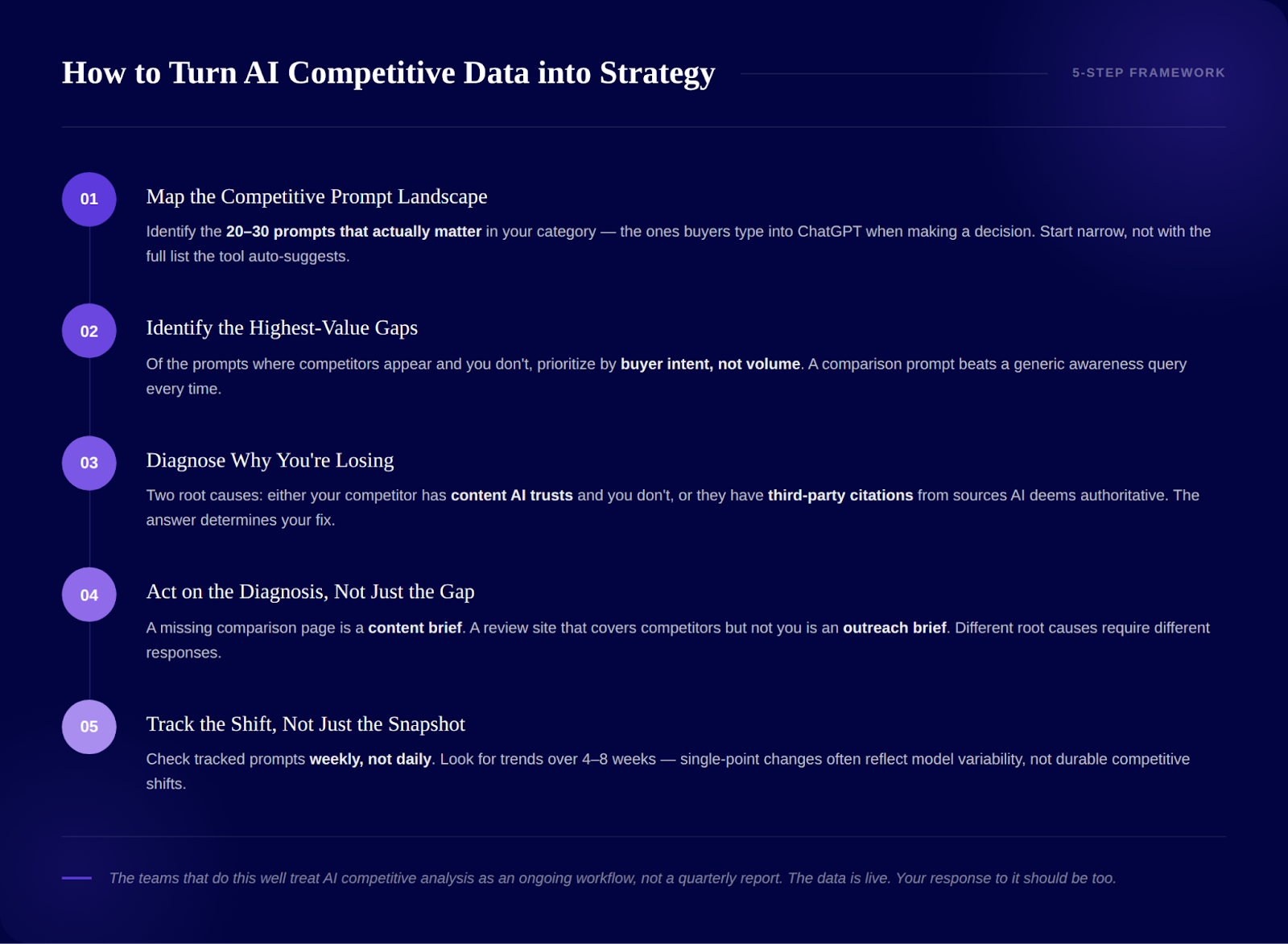

The problem isn't a shortage of competitive data — it's too much of it with no clear priority order. Here's how to cut through it.

Before you look at where you're losing, understand the territory. What are the 20-30 prompts that actually matter in your category — the ones your buyers are typing into ChatGPT when they're trying to make a decision? Start there, not with the full list the tool auto-suggests. Narrow first, expand later.

Of all the prompts where your competitor appears and you don't, which have the highest buyer intent? A comparison prompt like "X vs. Y for enterprise finance teams" is more valuable to win than a general awareness prompt. Sort by commercial intent of the query, not by volume.

There are usually two reasons a competitor is cited where you aren't: either your competitor has content that AI trusts and you don't, or they have third-party citations from sources AI deems authoritative and you don't. The source analysis features in tools like Peec, AthenaHQ, and Profound tell you which. That answer determines whether you fix this with new content or with a PR and outreach campaign.

A citation gap that exists because you lack a structured comparison page is a content brief. A gap that exists because a major review site consistently cites your competitor but has never covered you is an outreach brief. Different root causes require different responses. Tools that help you distinguish between them — like AthenaHQ's Action Center or Scrunch's site audit and optimizer workflow — compress the time from insight to execution.

Competitive AI search positions move. After you publish new content or earn new citations, it takes time for AI models to incorporate the signal. Check your tracked prompts weekly, not daily. Look for trends over four to eight weeks rather than reacting to single-point changes, which can reflect model variability rather than durable shifts.
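If you export weekly share of voice numbers, a simple way to apply this advice is to compare the average of the most recent four weeks against the four weeks before it, and to ignore small differences. The sketch below does exactly that. The `classify_trend` function, the four-week window, and the 5-point threshold are illustrative assumptions, not values recommended by any of the tools above.

```python
from statistics import mean


def classify_trend(weekly_values, window=4, min_change=0.05):
    """Compare the latest window of weekly values to the previous window.

    Returns "rising", "falling", "flat", or "insufficient data".
    min_change is the smallest difference in averages treated as real;
    anything smaller is assumed to be model variability.
    """
    if len(weekly_values) < 2 * window:
        return "insufficient data"

    previous = mean(weekly_values[-2 * window:-window])
    recent = mean(weekly_values[-window:])
    delta = recent - previous

    if delta >= min_change:
        return "rising"
    if delta <= -min_change:
        return "falling"
    return "flat"


# Hypothetical weekly share of voice for one prompt cluster (oldest first).
steady_gain = [0.20, 0.22, 0.19, 0.21, 0.27, 0.29, 0.28, 0.30]
noisy = [0.20, 0.25, 0.18, 0.22, 0.21, 0.24, 0.19, 0.23]

print(classify_trend(steady_gain))
print(classify_trend(noisy))
print(classify_trend([0.20, 0.30]))
```

Averaging over a window is what keeps a single unusual week from triggering a reaction. The right threshold depends on how noisy your own data is.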

The teams that do this well treat AI competitive analysis as an ongoing workflow, not a quarterly report. The data is live. Your response to it should be too.

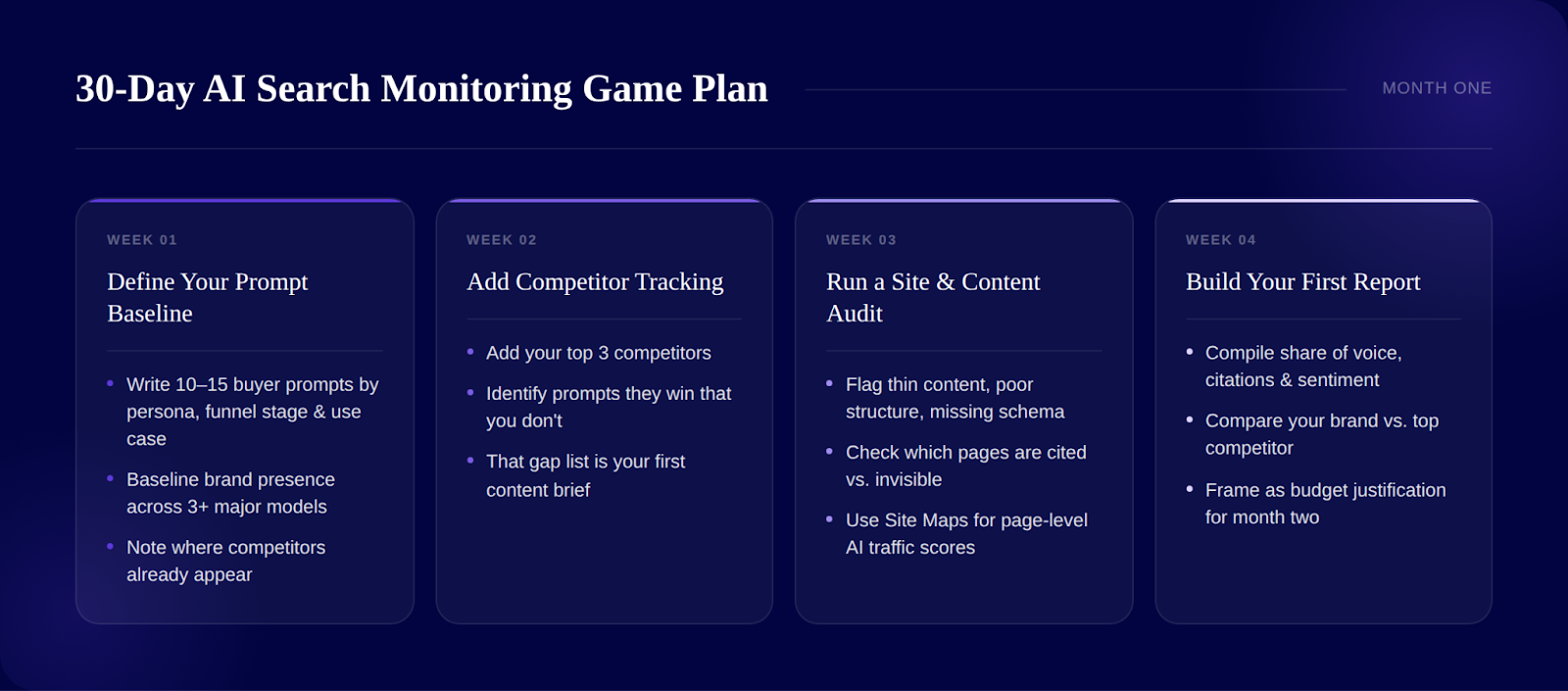

Write down 10-15 prompts your ideal buyer would type into ChatGPT. Think by persona, funnel stage, and use case. "Best [category] for [specific situation]" prompts are more useful than generic brand queries. Establish your baseline brand presence across at least three major models and note where competitors already appear.

Once you know where you stand, add your top three competitors. Where do they outrank you? Which prompts are they winning that you're absent from? That gap list is your first content brief.

AI crawlers consume content differently from Google. Look for pages with thin content, poor structure, or missing schema markup. Which of your pages are being cited in AI responses, and which aren't being picked up at all? Scrunch's Site Maps feature is particularly useful here — it gives you a global view of how AI traffic is consuming your site, where it's getting stuck, and page-level scores to prioritize fixes.

Compile your share of voice data, citation sources, and sentiment trend for your brand compared to your top competitor on the same dimensions. Present to leadership with a "here's where we are, here's where Competitor X is, here's what we're doing about it" framing. That's your budget justification for month two.

The goal in month one isn't to have a perfect strategy. It's to have data where you previously had none.

In AI search, competitive intelligence isn't optional — it's the price of admission to a channel that converts at four to five times the rate of traditional organic. The brands establishing citation authority now are building a position that will be exponentially harder to displace as AI search matures.

Your competitors may already be monitoring their AI presence — and yours. The tools exist, the data is accessible, and the window to act before citation patterns harden is open right now. Start with one tool, establish your competitive baseline, and build from there.

It depends on your use case and budget. For maximum competitive intelligence depth and configurability — especially for enterprise and agency teams — Scrunch leads with its customizable dimensions and Site Maps. For enterprise teams who also need to act on what they find through content generation in the same platform, Profound is the strongest option. AthenaHQ is a strong mid-market pick with its Action Center. For teams already using Semrush, the AI Visibility Toolkit integrates competitive AI data into a familiar workflow. And for budget-conscious teams just getting started, Otterly at $29/month is the clearest entry point.

Start by defining the prompts your buyers actually use when researching your category in ChatGPT or Perplexity. Then use a tool like Scrunch, Peec, or AthenaHQ to track which competitors appear in AI responses to those prompts and how often. The output to focus on is the citation gap: the prompts where your competitor is cited and you're not. From there, diagnose whether the gap exists because of missing content or missing third-party citations, and act accordingly. The process is more structured than most teams expect — and more actionable than traditional competitive SEO analysis, because each gap maps to a specific fix.

In traditional SEO, Ahrefs and Semrush dominate. In AI search, the purpose-built options — Scrunch, Profound, Peec, AthenaHQ, and Otterly — are built specifically for the prompt-level competitive data that SEO tools can't surface. Semrush bridges both worlds with its AI Visibility Toolkit, and Ahrefs Brand Radar extends that platform into AI monitoring with a database of 300+ million search-backed prompts. The right answer for most teams in 2026 is a combination: keep your traditional SEO competitive tool and add an AI-specific one on top.

Pick any tool from this list and add your main competitors as tracked brands. Otterly, Peec, and AthenaHQ all have self-serve trials that let you see competitor mention data in under a day of setup. Define the prompts where you'd expect competition — "best [category] for [use case]" queries — and run them across at least ChatGPT, Perplexity, and Google AI Overviews. Most tools will show you which brands appear, how often, and in what context. That's your competitive baseline. From there, the work is understanding why they're appearing — and what would need to change for you to appear instead.

Irina is the founder of ONSAAS, Growth Lead at Aura, and a SaaS marketing consultant. She helps companies grow their revenue with SEO and inbound marketing. In her spare time, Irina entertains her cat Persie and collects airline miles.