The AEO tool market has exploded — there are a dozen credible tools in this space now, and most look similar on paper. Both Profound and Scrunch offer AI visibility tracking, competitive monitoring, and citation analysis. Both have credible teams, real customers, and documented results.

We're focusing on these two specifically because they represent genuinely different product philosophies worth understanding before you spend a dollar on either.

Here's an honest breakdown of Profound vs. Scrunch for AEO: two tools built on different assumptions about what the discipline actually requires. The goal is to help you make a call that fits your situation.

The table below captures the structural differences at a glance. Each section that follows goes deeper into what those differences mean in practice.

If you're already comparing specific tools, you know the basics. But the framing matters here because it's what separates a surface-level feature comparison from a decision you won't regret in six months.

AEO (Answer Engine Optimization) is the practice of optimizing your content for LLMs and AI-powered search engines to surface your brand accurately when users ask questions that matter to your business. Three shifts define it:

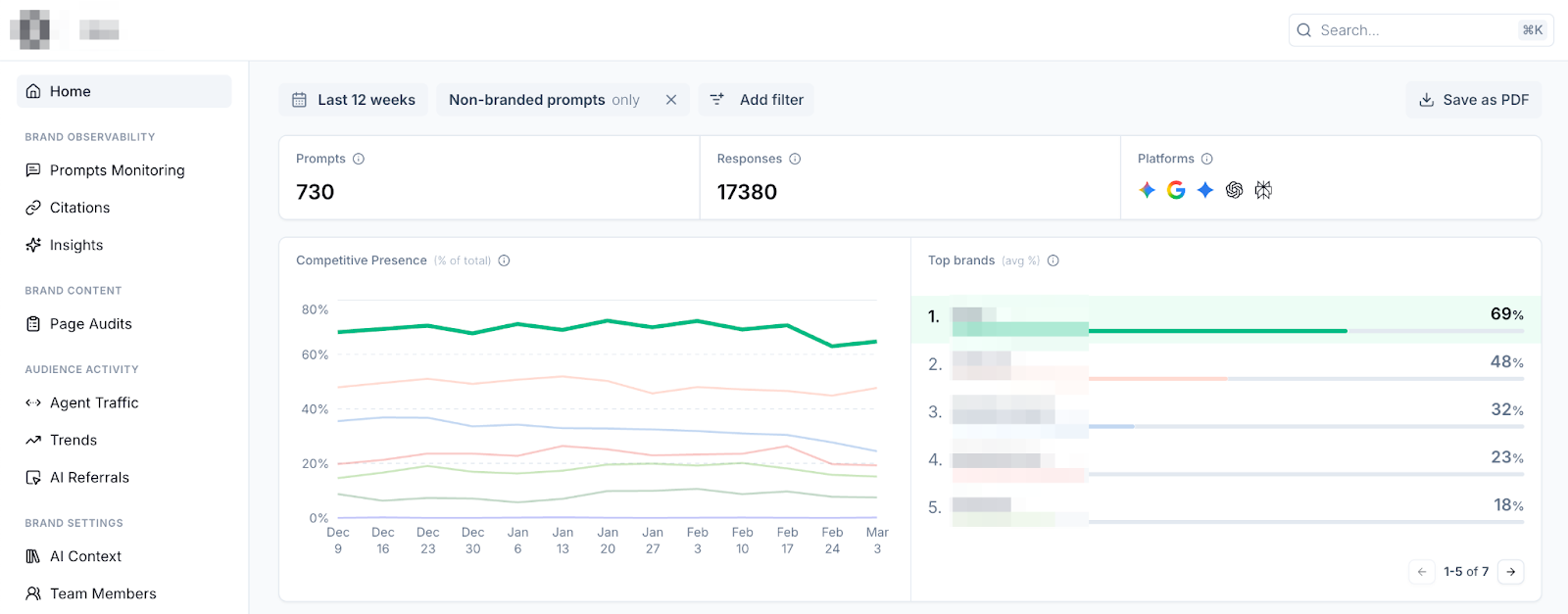

Most AEO tools look alike on the surface because the core monitoring task is similar: run prompts, record citations, and track share of voice. The meaningful differences live one layer down — in drill-down depth, prompt control, and what the platform helps you do with what it finds. That's precisely where Profound and Scrunch diverge, and it's what the rest of this article unpacks.
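To make the core monitoring loop concrete, here is a minimal sketch of the last step, turning recorded citations into share of voice. It assumes each prompt run has already been recorded as a dictionary with a `cited` list of brand names. The record shape, the `share_of_voice` function, and the brand names are hypothetical illustrations, not either platform's data model, and real tools may define share of voice differently.

```python
from collections import Counter

def share_of_voice(runs, tracked_brands):
    """Return each tracked brand's share of all tracked-brand citations.

    Assumption: a brand counts at most once per prompt run, and brands
    outside `tracked_brands` are ignored.
    """
    counts = Counter()
    for run in runs:
        for brand in set(run["cited"]):
            if brand in tracked_brands:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in tracked_brands}

# Hypothetical recorded results for three prompt runs.
runs = [
    {"prompt": "best crm for startups", "cited": ["Acme", "Globex"]},
    {"prompt": "crm with email automation", "cited": ["Globex"]},
    {"prompt": "cheapest crm", "cited": ["Initech"]},
]

sov = share_of_voice(runs, ["Acme", "Globex"])
```

With this data, Globex holds two of the three tracked citations and Acme holds one. The point of the sketch is that the arithmetic is simple, so the differences between tools come from what surrounds it.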

The two tools are not trying to do the same thing. Understanding that upfront saves you from evaluating them on the wrong criteria.

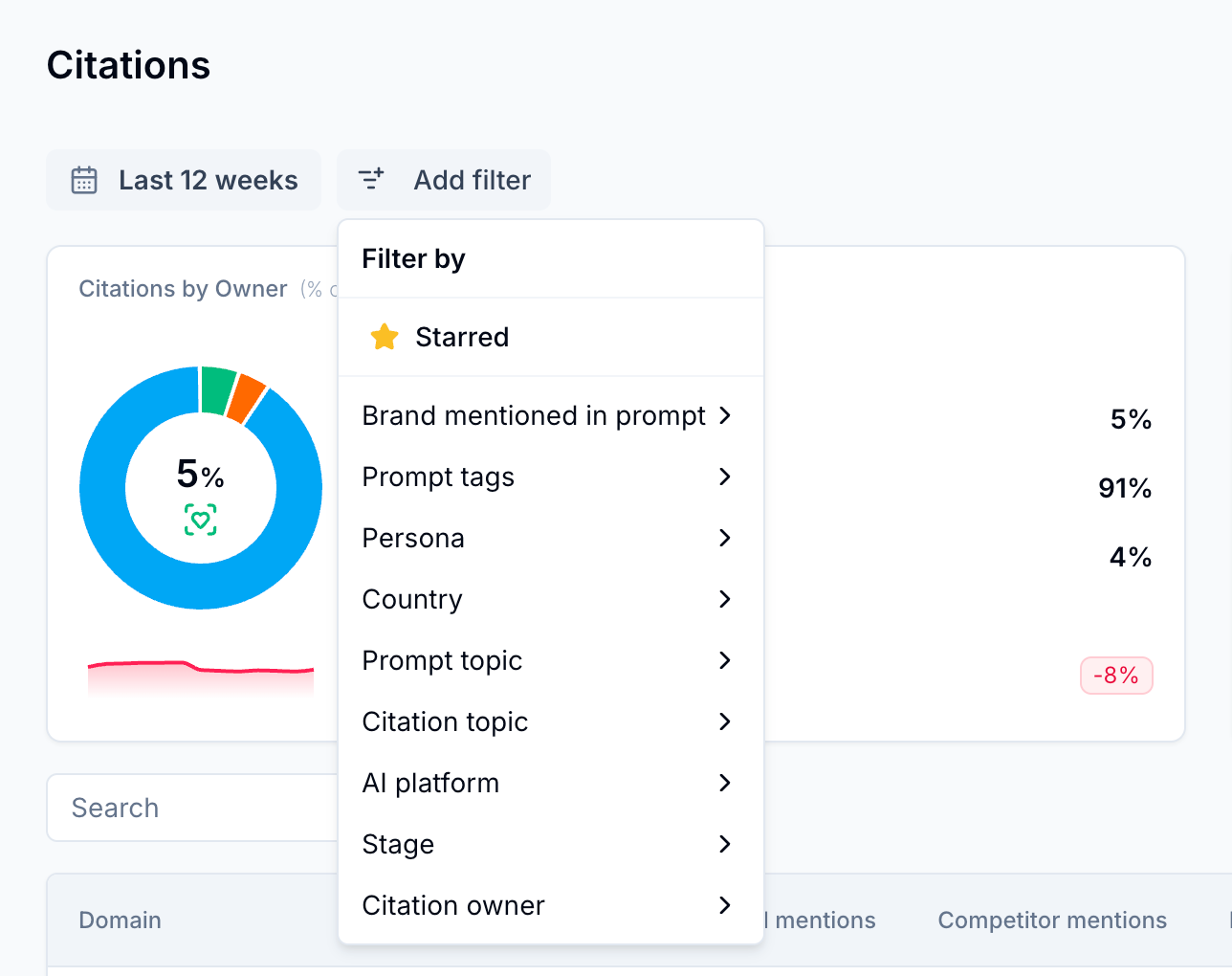

Scrunch is built for teams that want to diagnose problems and act, not just report. Everything in the monitoring taxonomy — prompts, personas, tags, funnel stages, regions, competitors — is self-defined and fully editable.

The distinction matters at scale. Without full control over how prompts are organized by tag, topic, persona, or funnel stage, managing AI search visibility across multiple product lines or regions becomes unmanageable quickly.

Practical strengths:

Scrunch covers more ground than a pure monitoring tool. Beyond tracking brand presence across eight AI platforms, it maps your entire site to surface underperforming pages and runs a deep AI audit to flag accessibility issues, JavaScript bloat, and metadata problems. You can then run any page through its Optimizer, which automatically rewrites and reformats content for AI consumption, and serve the result directly to LLMs via AXP, Scrunch's Agent Experience Platform.

The core question this section raises: does your team need a platform that accommodates how you already work, or one that hands you a structure to work within? That question drives everything else in this comparison.

Profound is designed to help with automated clustering, predefined templates, and built-in sentiment analysis. This reduces cognitive load for large, standardized teams that need to report consistently across an organization.

The interface can feel complex and overwhelming, and Profound requires meaningful configuration upfront. Teams need to define the prompts, brands, and competitors they want to track before the platform begins collecting visibility data. Because the analysis is based on those tracked prompts, the quality of the initial setup strongly influences how useful the insights will be. For teams with a clear AI visibility strategy, that’s manageable. For teams still figuring out which queries or competitors matter, the entry point can feel steep.

Practical strengths:

Profound may not be the right fit for SaaS startups and B2B tech companies (better-value alternatives exist at a fraction of the price), SEO agencies that need multi-account support, or any team that needs flexible configuration and competitor benchmarking.

The real test isn't features — it's what the platform feels like when you actually need it to tell you something useful. Here's where the differences above show up in practice.

Scrunch onboarding starts from a URL. From there, the platform fetches brand details, competitor lists, relevant personas, and a starter prompt set — all editable immediately.

Prompt setup goes further than most tools: generate prompts with AI in a single click, convert existing keywords into tracked prompts, or import via bulk CSV for more advanced setups. Most teams are running real prompts within an hour.

Profound's onboarding is polished and well-guided, but operates at a higher level of abstraction. Early insights tend to be high-level and require interpretation before they translate into something a content strategist or SEO lead can act on. For large enterprise teams where reporting clarity matters more than execution speed, that trade-off is reasonable. For agencies or lean B2B teams, it adds friction at exactly the wrong moment. Here’s how they compare:

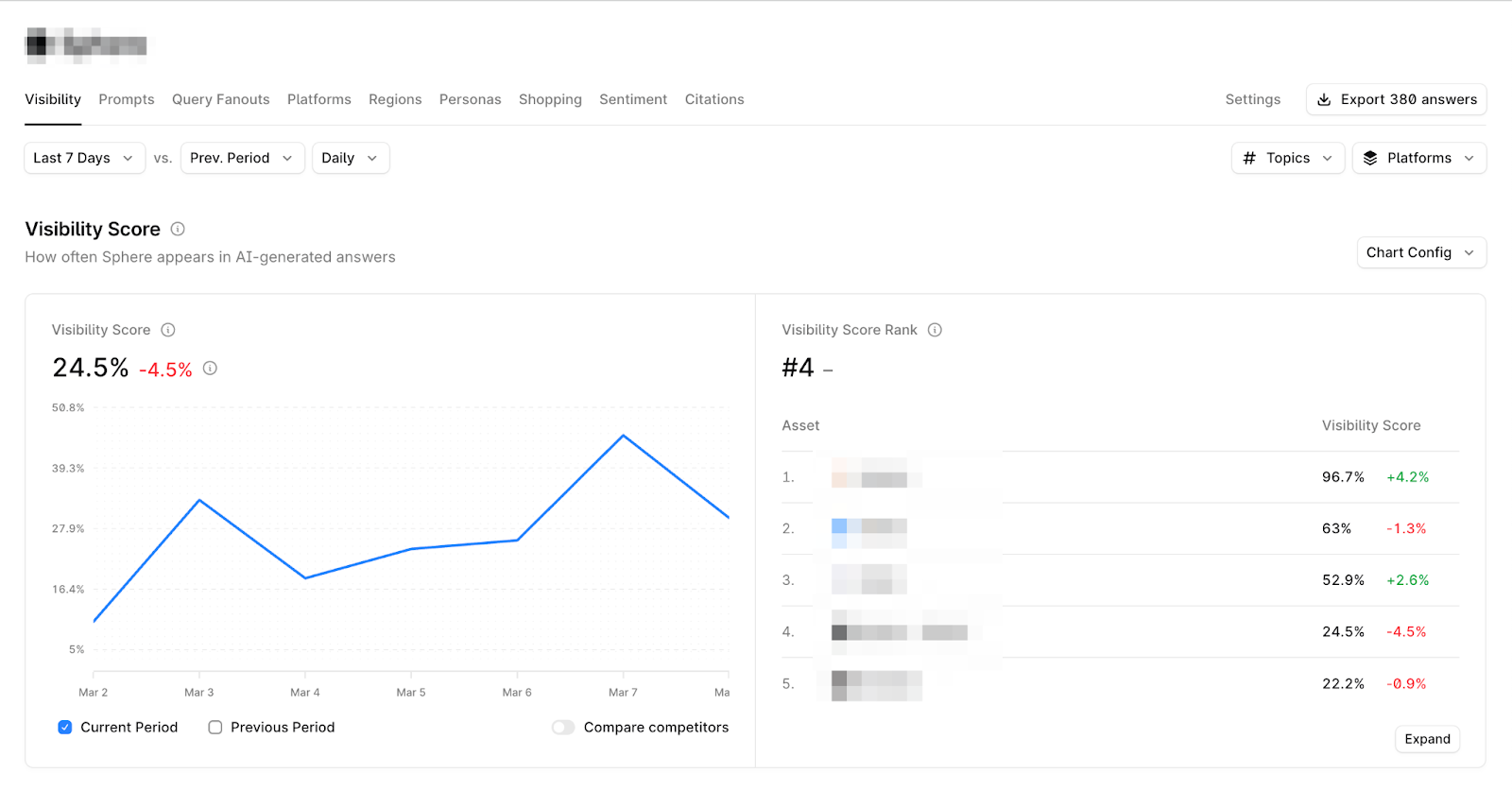

Both platforms tell you where you stand. The gap is what happens next.

Scrunch connects visibility data to specific actions: which content is being cited, which isn't, and where competitors are displacing you, prompt by prompt. In its current state, it's meaningfully ahead of a pure-monitoring setup.

Profound is stronger for reporting upward than executing downward. If your primary need is a clean dashboard for a CMO review or quarterly business report, it fits that workflow well. If you need to hand something actionable to a writer or technical SEO, the trail goes cold faster.

This is the most concrete differentiator, and the one that compounds over time.

Scrunch traces results to the source: which specific pages LLMs are pulling from, which they're ignoring, and which prompts a competitor is winning. One Reddit user who tested both tools put it plainly:

"Profound looks good on paper, but once we dug in, we weren't convinced it could actually do what the pitch deck promised. The insights felt high-level, almost like an AI-generated report of what should matter, not what was actually happening in the results. Hard to take action on that. Scrunch, on the other hand, gave us real, source-level transparency. We could literally see which exact pieces of content LLMs were pulling from — whether it was our clients' sites, third-party listings, or even competitors. That made it crystal clear what to optimize, and we saw AI visibility gains within weeks, not quarters."

Profound surfaces insights at a summary level. Understanding what's specifically driving a result requires more interpretation, and the platform doesn't offer the same source-level traceability. For teams that need to brief a writer or a technical SEO on exactly what to change, that depth gap matters.

This is the central tension between the two tools, and it deserves more than a bullet point. It's a real philosophical difference, not a quality gap.

The case for Scrunch's approach: You own the taxonomy entirely. Topics, tags, personas, funnel stages, regions, and competitor sets are all self-configured. That scales well for complex campaigns across multiple product lines, regions, or personas — a self-defined taxonomy is what makes the data usable at that level. The setup investment is real, but operators who need to slice data in non-standard ways consistently report that it pays off within weeks.

The case for Profound's approach: Automated clustering and templates reduce configuration time significantly. For large organizations with standardized reporting needs and no appetite for platform configuration, that's a genuine advantage. The ceiling is flexibility: if your use case doesn't fit the predefined structure, and specifically if you need to manually control which competitors or pages get monitored, you'll hit that ceiling quickly.

Neither approach is universally better. Ask yourself honestly: Does your team have the bandwidth to configure a platform, and do you need the output to reflect your specific segmentation? If yes, Scrunch's control pays for itself. If your primary output is consistent reporting across a standardized enterprise team, Profound's automation removes the friction you don't want.
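As a concrete picture of what "slicing by your own segmentation" means, here is a minimal sketch of a self-defined taxonomy attached to tracked prompts. The `TrackedPrompt` fields, the `visibility_by` helper, and the sample prompts are assumptions made for illustration; they are not Scrunch's or Profound's schema.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class TrackedPrompt:
    # Hypothetical taxonomy fields chosen by the team, not by the tool.
    text: str
    persona: str
    funnel_stage: str
    region: str
    brand_cited: bool

def visibility_by(prompts, dimension):
    """Fraction of prompts where the brand was cited, grouped by one taxonomy field."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for p in prompts:
        key = getattr(p, dimension)
        totals[key] += 1
        cited[key] += int(p.brand_cited)
    return {key: cited[key] / totals[key] for key in totals}

prompts = [
    TrackedPrompt("best AEO tools for agencies", "agency lead", "consideration", "US", True),
    TrackedPrompt("how to track AI citations", "content strategist", "awareness", "US", False),
    TrackedPrompt("AEO platform pricing comparison", "agency lead", "decision", "UK", True),
    TrackedPrompt("what is answer engine optimization", "content strategist", "awareness", "UK", True),
]

by_persona = visibility_by(prompts, "persona")
by_region = visibility_by(prompts, "region")
```

The same four prompts yield different pictures depending on the dimension: grouped by persona, the content strategist prompts are the weak spot; grouped by region, the US is. A predefined structure can only offer the cuts its designers anticipated.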

At face value, referencing the feature table above:

One note that applies to both: Don't commit to annual contracts before running your own prompts through each platform. Benchmark data tells you how a tool performs in general. Your specific market, personas, and competitors tell you whether it performs for you.

Current features matter. So does the direction each company is betting on, especially if you're making a multi-year commitment.

Scrunch's thesis is that AI agents — systems that browse, research, and make decisions autonomously on behalf of users — will become the primary high-value visitors for many B2B websites. If that's right, the optimization problem shifts from ranking for humans to performing well when an AI agent is the reader.

Today's websites are built for humans: JavaScript-heavy, image-rich, animation-laden. Good for people, largely unreadable for AI systems.

The Agent Experience Platform (AXP) is Scrunch's structural answer. It operates at the CDN layer, serving an optimized version of your site designed for agent consumption without touching the human-facing experience.

When an agent visits your site, it gets a version that's accurate, structured, and complete — the kind of content that gets cited.
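The general idea of serving a different variant to agents can be sketched as a routing decision on the request's user-agent string. This is a simplified illustration, not a description of how AXP works: the source does not say how Scrunch detects agents. The token list is a small, incomplete sample of publicly documented AI crawler names, and `select_variant` is a hypothetical helper.

```python
# Assumption: a short, illustrative list of AI crawler user-agent tokens.
# A production setup would need a maintained list and stronger verification,
# since user-agent strings can be spoofed.
AGENT_TOKENS = ("gptbot", "chatgpt-user", "oai-searchbot", "claudebot", "perplexitybot")

def is_ai_agent(user_agent: str) -> bool:
    """Return True if the user-agent string contains a known AI crawler token."""
    ua = user_agent.lower()
    return any(token in ua for token in AGENT_TOKENS)

def select_variant(user_agent: str) -> str:
    """Pick which version of a page to serve: 'agent' or 'human'."""
    return "agent" if is_ai_agent(user_agent) else "human"

bot_variant = select_variant("Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)")
browser_variant = select_variant("Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0")
```

Because the decision happens before the page is rendered, the human-facing site is left unchanged, which matches the "without touching the human-facing experience" claim above.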

Profound's thesis centers on workflow: automate the path from "here's where you're underperforming" to "here's what to publish," reducing the human effort between AEO data and editorial output.

Scrunch's counterargument is backed by research. Model collapse, the documented dynamic where LLMs trained on AI-generated text progressively degrade in accuracy and coherence, is a real risk when AI-generated content floods the web at scale. A Nature study on the phenomenon found that models trained recursively on their own outputs lose diversity and coherence over time. Scrunch's position is that LLMs won't reward content generated this way in the long run, unless it's heavily edited or uses proprietary data inputs.

That said, the risk isn't equal across use cases. For high-volume e-commerce with standardized templates, AI-generated content at scale may work fine for now. For B2B companies where differentiated positioning is the whole game, betting on AI-generated content starts to look like a race to the bottom.

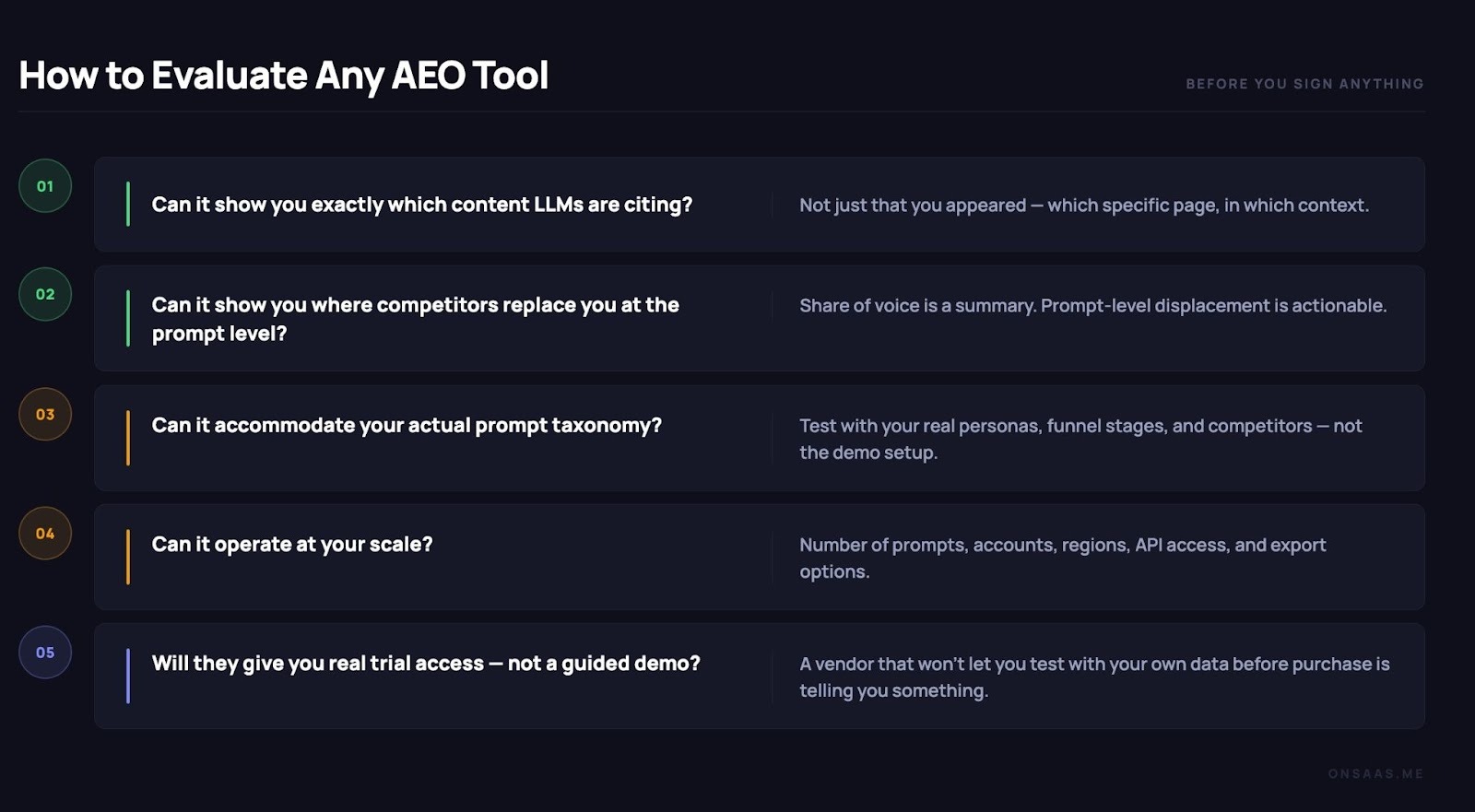

Before signing anything, run each platform against these five questions:

1. Can it show you exactly which content LLMs are citing — not just that you appeared, but which specific page, in which context?

2. Can it show you where competitors replace you at the prompt level?

3. Can it accommodate your actual prompt taxonomy — your real personas, funnel stages, and competitors, not the demo setup?

4. Can it operate at your scale — prompts, accounts, regions, API access, export options?

5. Will they give you real trial access — not a guided demo?

Neither tool fails all five questions, but a consistent pattern emerges.

Scrunch gives you more control, more granularity, and more flexibility at every layer. Profound gives you a cleaner out-of-the-box experience that works best when you don't need to customize much.

Profound and Scrunch are two legitimate tools making different bets on how AEO evolves. The right choice depends entirely on how your team operates and what you need the platform to do.

Choose Profound if you're an enterprise company with existing CDN infrastructure, SOC 2 requirements, and a primary need for clean dashboards and polished reporting for executive communication. The abstraction is a feature for the right team.

Choose Scrunch if you're an enterprise company, agency, or operator-oriented B2B team that needs to diagnose specific problems and act on them. The 8-engine coverage, source-level drill-down, multi-account support, and optimization features are what make the setup investment worth it.

If you're a SaaS or B2B tech company sitting somewhere in the middle, neither tool is an obvious slam dunk. Scrunch is the stronger fit for most of this audience, but the broader landscape has options worth exploring before you commit.

Run both on real prompts before you decide. Time-to-value looks different for every team, and no benchmark tells you what your own data will.

Irina is a Founder at ONSAAS, Growth Lead at Aura, and a SaaS marketing consultant. She helps companies grow their revenue with SEO and inbound marketing. In her spare time, Irina entertains her cat Persie and collects airline miles.